This post appeared originally in our sysadvent series and has been moved here following the discontinuation of the sysadvent microsite

When we installed the new frontend nodes for our main site, we wanted to make use of some technologies that aren’t yet in broad use by our customers. The intention was both to gain more experience with said technologies, and to show that they are ready for production use. HTTP/2 was one of these technologies.

HTTP/2 offers several features that improve the load speed of pages. To quote the HTTP/2 FAQ, the new version of the protocol:

While the setup on this blog does not make use of the “push” functionality, the rest of the features result in a faster-loading site for most clients, as well as fewer connections per second on the server hosting it.

A (very HTTP/2 friendly) demo can be observed at akamai.com [1]. Akamai has also made a video with a lot of observations and comparisons on real-world HTTP/2 use.

Note that HTTP/2 is still a fairly young technology. Some teething issues are to be expected as more sites start using it, uncovering problems with the server implementations in the process. CVE-2016-8740 and CVE-2016-1546 are examples of such issues.

In our case the choice was mainly between Varnish and Nginx. As the HTTP/2 support in Varnish 5.0 is still experimental, we decided to go with Nginx for now. Since Varnish is already a component of the site, we’ll probably switch to that when HTTP/2 becomes fully supported in it.

For a full list of HTTP/2 enabled servers, look at the entries of the HTTP/2 implementations list with “server” or “proxy” in the role column.

HTTP protocol negotiation can be done through two protocols, Next Protocol Negotiation (NPN) and its successor Application-Layer Protocol Negotiation (ALPN). The latter is the only negotiation protocol supported by HTTP/2, but it’s common for browsers to also support NPN since it was used by SPDY (the precursor of HTTP/2). This support is, however, temporary in the case of Chrome (and possibly others).

In other words, if you want to make sure that your server will stay HTTP/2 enabled in the future, verify that it uses a TLS implementation new enough to support ALPN. Daemons using OpenSSL need at least version 1.0.2. The ALPN protocol page at Wikipedia lists which versions of other libraries have the required support.
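The version requirement can be checked with a few lines of shell. This is only a sketch: the helper names `version_ge` and `openssl_version` are our own invention, and the comparison assumes a `sort` that supports `-V` (GNU coreutils).

```shell
# version_ge A B: succeeds when dotted version A is >= version B.
# Assumes `sort -V` (version sort) is available, as in GNU coreutils.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# openssl_version: prints the numeric part of `openssl version`,
# e.g. "1.0.2" from "OpenSSL 1.0.2g  1 Mar 2016".
openssl_version() {
    openssl version | awk '{ print $2 }' | sed 's/[^0-9.].*$//'
}

# Usage:
#   if version_ge "$(openssl_version)" 1.0.2; then
#       echo "OpenSSL is new enough for ALPN"
#   fi
```

Keep in mind that this inspects the `openssl` command line tool, which is not necessarily the library your daemon is linked against. For Nginx, the output of `nginx -V` includes the OpenSSL version it was built with.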

Nginx has HTTP/2 support in version 1.9.5+. As Ubuntu 16.04 currently has version 1.10.0 in its repositories, we just needed to do an apt install nginx to install it. Ubuntu 16.04 also uses OpenSSL version 1.0.2, so we’ve also got the required ALPN support out of the box.

After installation, the only additional configuration required for HTTP/2 support was to add http2 as a parameter to the listen directive in the relevant virtual-host file(s) under /etc/nginx/sites-enabled/:

listen [::]:443 default_server ssl http2 ipv6only=off;
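If you manage several virtual hosts, a small helper can point out files that lack the parameter. The function name `has_http2_listen` is hypothetical, and the pattern only covers the simple one-line listen syntax shown above. Newer Nginx releases (1.25.1 and later) prefer a separate `http2 on;` directive, which this sketch does not look for.

```shell
# has_http2_listen FILE: succeeds if FILE contains a listen directive
# that carries the http2 parameter (one-line directives only).
has_http2_listen() {
    grep -Eq '^[[:space:]]*listen[[:space:]][^;#]*[[:space:]]http2([[:space:]]|;)' "$1"
}

# Usage:
#   for f in /etc/nginx/sites-enabled/*; do
#       has_http2_listen "$f" || echo "no http2 listen directive in $f"
#   done
#   sudo nginx -t    # let Nginx validate the configuration before reloading
```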

The default cipher list in Nginx allows a lot of ciphers/combinations that are quite weak. A good source of sane cipher lists is the Mozilla wiki. We prefer the intermediate level for a public website like this. To achieve this, we add cipher settings to the same virtual-host file(s) as above:

ssl_protocols TLSv1 TLSv1.1 TLSv1.2;

ssl_ciphers "ECDHE-ECDSA-CHACHA20-POLY1305:ECDHE-RSA-CHACHA20-POLY1305:ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:DHE-RSA-AES128-GCM-SHA256:DHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-AES128-SHA256:ECDHE-RSA-AES128-SHA256:ECDHE-ECDSA-AES128-SHA:ECDHE-RSA-AES256-SHA384:ECDHE-RSA-AES128-SHA:ECDHE-ECDSA-AES256-SHA384:ECDHE-ECDSA-AES256-SHA:ECDHE-RSA-AES256-SHA:DHE-RSA-AES128-SHA256:DHE-RSA-AES128-SHA:DHE-RSA-AES256-SHA256:DHE-RSA-AES256-SHA:ECDHE-ECDSA-DES-CBC3-SHA:ECDHE-RSA-DES-CBC3-SHA:EDH-RSA-DES-CBC3-SHA:AES128-GCM-SHA256:AES256-GCM-SHA384:AES128-SHA256:AES256-SHA256:AES128-SHA:AES256-SHA:DES-CBC3-SHA:!DSS";

ssl_dhparam /etc/sslcerts/dhparam.pem;

ssl_prefer_server_ciphers on;

The Mozilla wiki has a good explanation of why you need a dhparam file, and its contents.

A couple of basic tests can be done to verify that the site is now up and running with HTTP/2. First, let’s verify that clients are allowed to negotiate HTTP/2 (or h2 as it’s usually referred to by software). Make sure you’ve got OpenSSL version 1.0.2 or above, as earlier versions do not support ALPN:

$ openssl s_client -connect www.redpill-linpro.com:443 -alpn 'h2' </dev/null 2>&1 | grep ALPN

ALPN protocol: h2

OpenSSL says that we can negotiate an HTTP/2 connection, so far so good. Note that we do not use -nextprotoneg to test, as that would test the NPN protocol instead of the ALPN protocol.
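For repeated checks (monitoring, for instance) the same test can be wrapped in a function. This is a sketch under a few assumptions: the names `negotiated_alpn` and `check_h2` are ours, the client offers both h2 and http/1.1 so the server gets to choose, and `-servername` is added because older OpenSSL clients do not send SNI by default.

```shell
# negotiated_alpn: reads `openssl s_client` output on stdin and prints the
# negotiated ALPN protocol, or nothing if none was negotiated.
negotiated_alpn() {
    sed -n 's/^ALPN protocol: //p'
}

# check_h2 HOST [PORT]: succeeds if the server selects h2 via ALPN.
check_h2() {
    host=$1
    port=${2:-443}
    proto=$(openssl s_client -connect "$host:$port" -servername "$host" \
        -alpn 'h2,http/1.1' </dev/null 2>&1 | negotiated_alpn)
    [ "$proto" = "h2" ]
}

# Usage:
#   check_h2 www.redpill-linpro.com && echo "h2 negotiated"
```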

Next we want to try an HTTP/2 request. Most command line clients in Ubuntu 16.04 are still without the required support, but the nghttp2 project (an HTTP/2 library) also has a client available:

$ sudo apt -y install nghttp2-client

...

$ nghttp --null-out --verbose https://www.redpill-linpro.com

[ 0.005] Connected

The negotiated protocol: h2

...

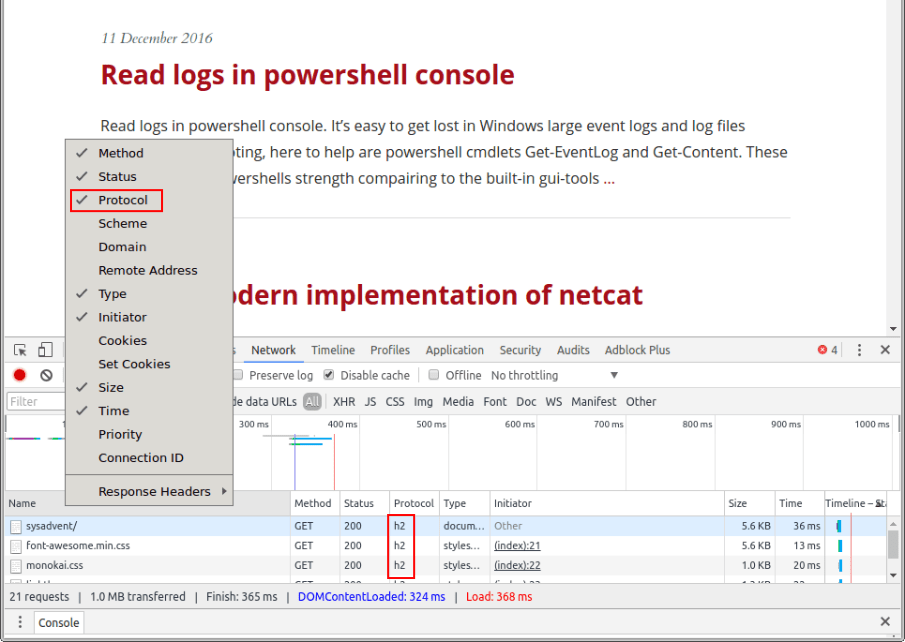

The protocol can also be checked in Chrome, as the Network tab in its developer panel (accessed through <F12>) has a “Protocol” column. Just right-click any of the column titles to display the menu where you can enable it:

All of our tests returned good results. If they hadn’t, Cloudflare has an excellent blog post about further debugging and testing.

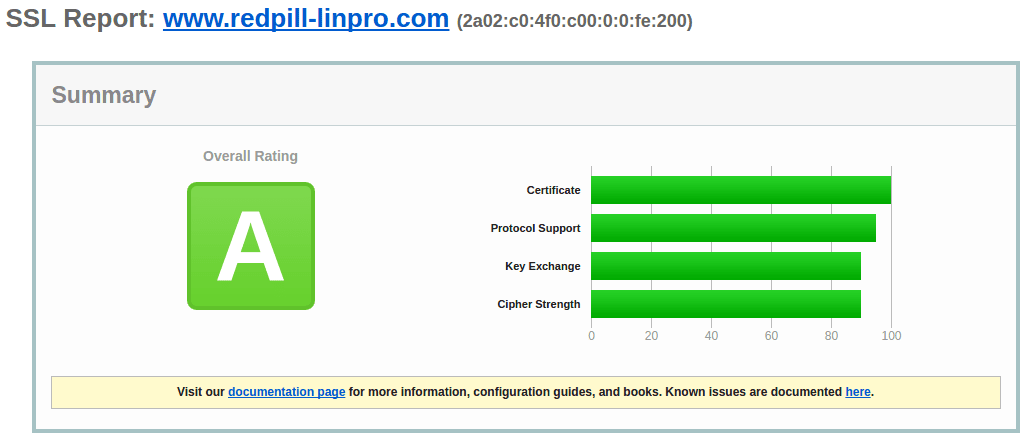

We’ve now ended up with an A rating at ssllabs.com/ssltest, with IE6 on Windows XP and Java 6 being the only clients that fail, and all newer browsers using h2.

The Cipherscan tool mentioned in an earlier blog entry is also quite happy:

$ ./analyze.py -t www.redpill-linpro.com:443 -l intermediate

www.redpill-linpro.com:443 has intermediate ssl/tls

and complies with the 'intermediate' level

Changes needed to match the intermediate level:

* consider using DHE of at least 2048bits and ECC of at least 256bits

* consider enabling OCSP Stapling

We haven’t enabled OCSP stapling (yet), and the DHE message is a false positive, as we actually use 4096-bit DH parameters. The scan does, however, confirm that the cipher suite is good.

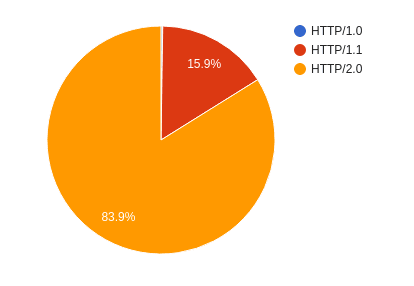

Since most web browsers have fully supported HTTP/2 for a while now, the observed protocol usage for December is pretty much as expected:

HTTP/1.0 is only at 0.24%, making it almost invisible in the chart. It almost exclusively consists of crawlers and bots. The bulk of HTTP/1.1 also seems to be crawlers/bots, with a decent chunk of older mobile devices that haven’t had browser updates in a while thrown into the mix.
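A breakdown like this can be produced straight from the access log. The sketch below assumes the default combined log format, where the request line is the first quoted field, and the function name `protocol_share` is our own. Nginx logs HTTP/2 requests as HTTP/2.0.

```shell
# protocol_share: reads access-log lines on stdin and prints, for each
# HTTP protocol version, the request count and its share of the total,
# most common first.
protocol_share() {
    awk -F'"' '
        {
            # $2 is the request line, e.g. "GET / HTTP/2.0";
            # its last word is the protocol version.
            n = split($2, req, " ")
            if (n >= 1 && req[n] ~ /^HTTP\//) { count[req[n]]++; total++ }
        }
        END {
            for (p in count)
                printf "%s %d %.2f%%\n", p, count[p], 100 * count[p] / total
        }
    ' | sort -k2,2nr
}

# Usage (the log path is an assumption, adjust to your setup):
#   protocol_share < /var/log/nginx/access.log
```

Lines with a malformed or empty request are skipped, so the percentages are relative to the requests that could be parsed.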

[1] The demo website for HTTP/2 was shut down in 2023/2024, and the link has been removed.
