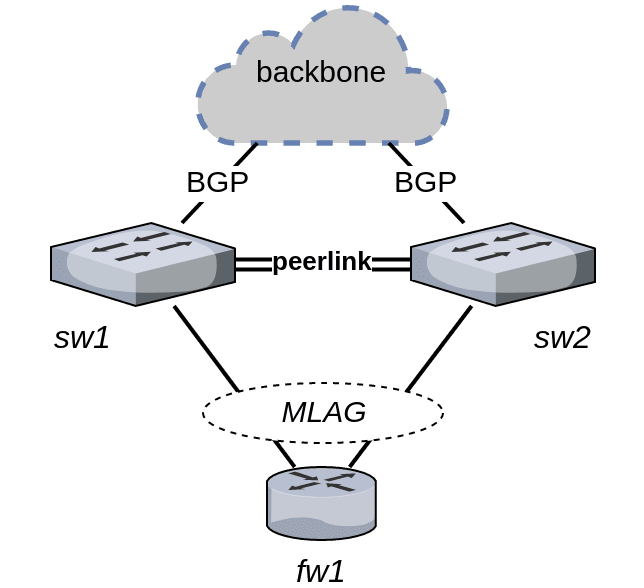

We build our network in order to simultaneously achieve high availability and maximum utilisation of available bandwidth. To that end, we are using Multi-Chassis Link Aggregation (MLAG) between our data centre switches running Cumulus Linux and our firewall cluster «on a stick»:

fw1 is tricked into believing it is dual connected to a single device that speaks the IEEE 802.3ad Link Aggregation Control Protocol.

This works great for layer 2 and provides active/active load balancing of fw1’s outbound traffic.

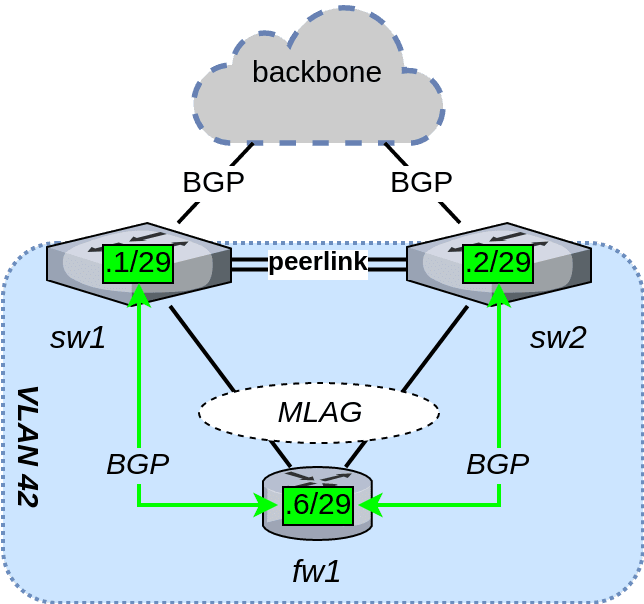

Bringing layer 3 routing into the mix complicates matters. While an MLAG switch pair behaves as a single device from a layer 2 perspective, the switches remain independent entities on layer 3. For this reason, fw1 is required to maintain two BGP sessions – one session to each switch. We facilitate this by creating a link VLAN where the firewall and both switches are connected, as shown below. Each switch enables a layer 3 switch virtual interface (SVI) in the link VLAN.

MLAG + ECMP considered harmful

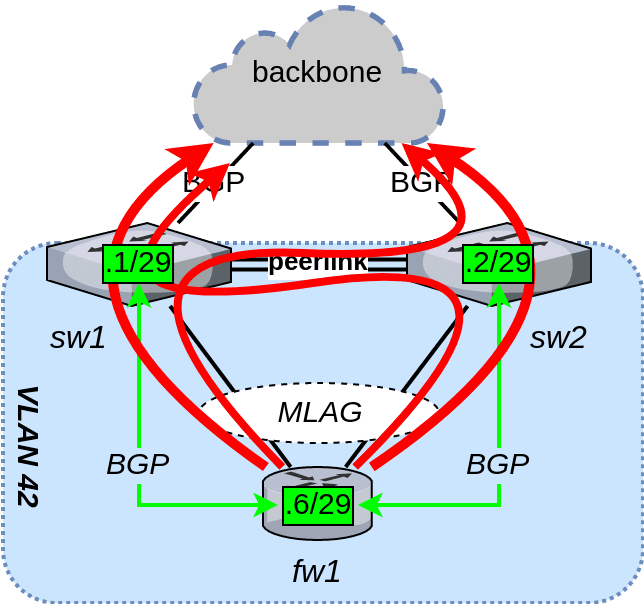

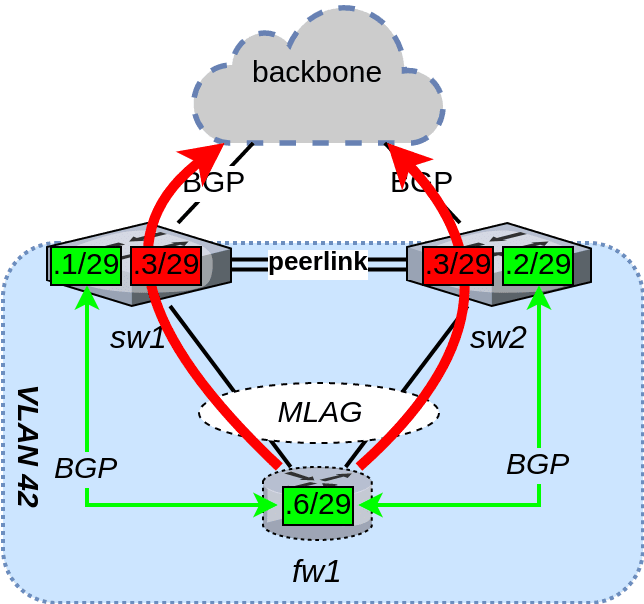

If fw1 had been connected with two pure layer 3 up-links to the switches (i.e., no MLAG), then we would normally have used ECMP to load balance its outgoing traffic. While that approach does ostensibly work with MLAG too, it causes problems further down the line. The two load balancing methods in play here – 802.3ad and ECMP – are totally unaware of each other. A packet that ECMP decided to load balance towards sw1’s SVI might actually end up being transmitted to sw2 first, because that is the LAG member 802.3ad load balanced it to (or vice versa).

We would therefore end up in a situation where about 50% of the outgoing traffic from fw1 is sent to the wrong switch and has to cross the peer-link between the two switches, as shown below. Although it will work, it is of course not very efficient.

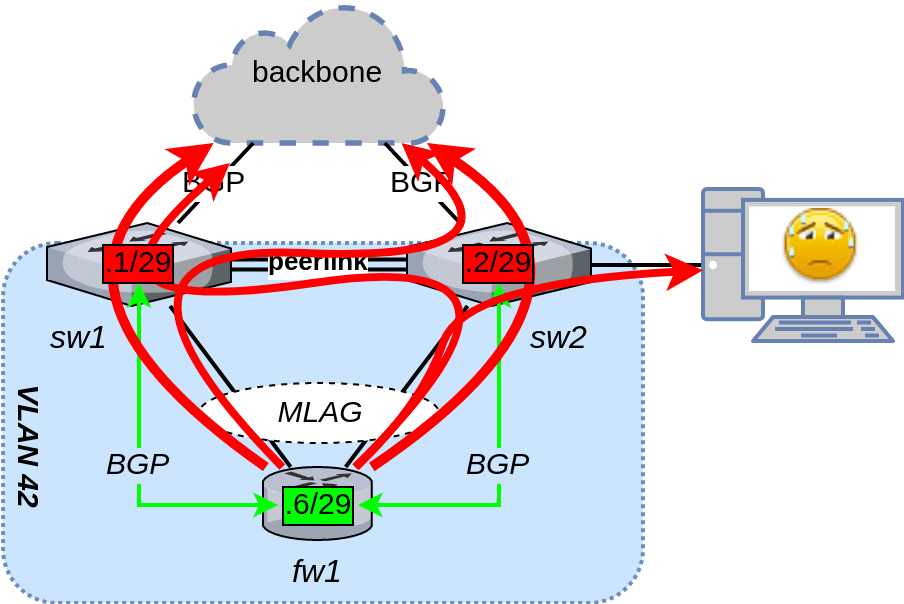
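The "about 50%" figure follows from the two hash decisions being independent. Here is a minimal simulation sketch of that reasoning. The salted SHA-256 hash and the random flow tuples are stand-ins chosen for illustration; they are not the hash functions that the Linux bonding driver or the kernel's ECMP code actually use.

```python
import hashlib
import random


def pick(flow, salt, n=2):
    """Deterministically map a flow tuple to one of n paths using a salted hash."""
    digest = hashlib.sha256(f"{salt}|{flow}".encode()).digest()
    return digest[0] % n


def misdirected_fraction(num_flows=10_000, seed=1):
    """Fraction of flows where the ECMP choice and the LAG member choice disagree."""
    rng = random.Random(seed)
    wrong = 0
    for _ in range(num_flows):
        # Hypothetical flow tuple: source IP, destination IP, source port, destination port.
        flow = (rng.getrandbits(32), rng.getrandbits(32), rng.randrange(1024, 65536), 443)
        ecmp_choice = pick(flow, "ecmp")  # which switch's SVI the route lookup selects
        lag_choice = pick(flow, "lag")    # which physical LAG member carries the frame
        if ecmp_choice != lag_choice:
            wrong += 1
    return wrong / num_flows


print(f"{misdirected_fraction():.1%} of flows land on the wrong switch")
```

With two next-hops and two LAG members, two independent choices disagree roughly half of the time, which is where the estimate comes from.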

Unfortunately, that is not the only issue. Cumulus Linux’s MLAG implementation disables MAC learning on the peer-link. All the misdirected traffic will be flooded as unknown unicast. If either of the switches has another unrelated VLAN trunk with all VLANs active (i.e., no pruning), fw1’s misdirected traffic will be flooded to that link as well, possibly saturating it and/or causing high CPU usage on one or more unrelated devices:

Using ECMP for layer 3 load balancing on an MLAG link is simply out of the question. What now?

VRR and BGP to the rescue

Fortunately, Cumulus Linux implements another layer 3 load balancing method that saves the day. This is called Virtual Router Redundancy, or VRR for short.

It is a simple and elegant solution. It works by having both switches create a secondary VRR SVI on the link VLAN. This secondary SVI has the same IP and MAC addresses on both switches. Thus, when fw1 transmits a packet destined for the VRR SVI, it will never have to be routed across the MLAG peer-link because the destination is local to both switches.
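The effect of the shared MAC address can be sketched with a toy forwarding model. The VRR MAC below is the one used in the configuration files in this post. The two physical SVI MACs are made-up placeholders, and the function is a deliberate simplification of what the switch hardware does.

```python
VRR_MAC = "00:00:5e:00:01:2a"  # shared virtual MAC, as configured with address-virtual
SW1_MAC = "02:00:00:00:00:01"  # hypothetical physical SVI MAC of sw1
SW2_MAC = "02:00:00:00:00:02"  # hypothetical physical SVI MAC of sw2

# MAC addresses each switch treats as its own router MAC in the link VLAN.
OWNED_MACS = {
    "sw1": {SW1_MAC, VRR_MAC},
    "sw2": {SW2_MAC, VRR_MAC},
}


def handle_frame(receiving_switch, dst_mac):
    """Decide what a switch does with a frame that arrived on its LAG member."""
    if dst_mac.lower() in OWNED_MACS[receiving_switch]:
        return "route locally"
    return "cross peer-link"


for next_hop_mac, label in [(SW1_MAC, "sw1 SVI"), (VRR_MAC, "VRR SVI")]:
    for switch in ("sw1", "sw2"):
        result = handle_frame(switch, next_hop_mac)
        print(f"next hop {label}, frame lands on {switch}: {result}")
```

Whichever LAG member the 802.3ad hash picks, a frame addressed to the VRR MAC is routed by the switch that receives it. A frame addressed to sw1's own MAC is only routed locally if it happens to land on sw1.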

The VRR SVI cannot be used to terminate a BGP session, because a TCP session requires both endpoints to be unique. Therefore, fw1 will still need to speak BGP directly with the primary SVIs on sw1 and sw2.

The remaining piece of the puzzle is to use the built-in capability of the BGP protocol to signal a non-default next-hop for each of the advertised routes: the VRR SVI. This is easily done in Cumulus Linux / FRRouting using a route-map, and results in fw1’s outbound traffic taking the ideal path through the network:

The resulting routing can be seen by querying the advertisements received by the BIRD BGP daemon running on fw1:

root@fw1:~# birdc show route

BIRD 1.5.0 ready.

0.0.0.0/0 via 192.0.2.3 on bond0 [sw1 11:46:56 from 192.0.2.1] * (100) [AS39039i]

via 192.0.2.3 on bond0 [sw2 11:46:57 from 192.0.2.2] (100) [AS39039i]

root@fw1:~# birdc6 show route

BIRD 1.5.0 ready.

::/0 via 2001:db8::3 on bond0 [sw1 11:46:56 from 2001:db8::1] * (100) [AS39039i]

via 2001:db8::3 on bond0 [sw2 11:46:57 from 2001:db8::2] (100) [AS39039i]

Success! It has learned a redundant pair of IPv4/IPv6 default routes, one from each switch. Since the next-hop is the VRR SVI that is shared between both switches, the traffic destined for the backbone (from which the default routes originate) will never have to cross the peer-link – exactly what we set out to achieve.

(Packets that are part of the two BGP sessions themselves might still be forwarded across the peer-link and cause unknown unicast flooding, as discussed earlier. Fortunately, a BGP session only causes a minute amount of traffic, so this is not really a problem worth worrying about.)
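If you want to check this automatically rather than by eye, the `birdc show route` output above is easy to parse. The following is a rough sketch that assumes the BIRD 1.5 line format shown in this post; the regular expression and the helper names are my own and are not part of BIRD. It treats every route in the output as belonging to the same prefix, which holds here because only the default route is present.

```python
import re

# Matches e.g. "via 192.0.2.3 on bond0 [sw1 11:46:56 from 192.0.2.1]".
ROUTE_RE = re.compile(r"via (\S+) on (\S+) \[(\S+) \S+ from ([^\]\s]+)\]")

SAMPLE = """\
BIRD 1.5.0 ready.
0.0.0.0/0 via 192.0.2.3 on bond0 [sw1 11:46:56 from 192.0.2.1] * (100) [AS39039i]
 via 192.0.2.3 on bond0 [sw2 11:46:57 from 192.0.2.2] (100) [AS39039i]
"""


def parse_routes(output):
    """Extract next hop, interface, protocol name and BGP peer from each route line."""
    routes = []
    for line in output.splitlines():
        match = ROUTE_RE.search(line)
        if match:
            next_hop, iface, protocol, peer = match.groups()
            routes.append(
                {"next_hop": next_hop, "iface": iface, "protocol": protocol, "peer": peer}
            )
    return routes


def shared_next_hop(routes):
    """Return the common next hop if all routes agree on one, otherwise None."""
    hops = {route["next_hop"] for route in routes}
    return hops.pop() if len(hops) == 1 else None


routes = parse_routes(SAMPLE)
print("peers:", [route["peer"] for route in routes])
print("shared next hop:", shared_next_hop(routes))
```

The same pattern also matches the `birdc6` output, since it does not assume anything about the address family.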

Configuration files

I’m including the basic configuration files necessary to set up the topology in the figures, in case you want to try this out for yourself. It can be done in a virtual lab environment using Cumulus VX too, so there is no need to buy any lab hardware.

sw1

sw1: /etc/network/interfaces

# Unrelated interfaces removed

auto swp1

iface swp1

address 192.0.2.253/31

address 2001:db8:a::1/127

alias uplink to backbone

auto swp42

iface swp42

alias downlink to fw1 port eth0

auto swp47

iface swp47

alias peerlink LAG member 1

auto swp48

iface swp48

alias peerlink LAG member 2

auto bridge

iface bridge

bridge-ports mlag-fw1 peerlink

bridge-vids 42

bridge-vlan-aware yes

auto mlag-fw1

iface mlag-fw1

bond-slaves swp42

bridge-pvid 42

clag-id 42

mstpctl-bpduguard yes

mstpctl-portadminedge yes

auto peerlink

iface peerlink

bond-slaves swp47 swp48

auto peerlink.4094

iface peerlink.4094

address 169.254.1.1/30

alias MLAG state synchronisation interface

clagd-peer-ip 169.254.1.2

clagd-priority 16384

clagd-sys-mac 44:38:39:ff:10:ff

auto vlan42

iface vlan42

address 192.0.2.1/29

address 2001:db8::1/64

address-virtual 00:00:5E:00:01:2A 192.0.2.3/29 2001:db8::3/64

alias Layer 3 link network between sw1/sw2/fw1

vlan-id 42

vlan-raw-device bridge

sw1: /etc/frr/frr.conf

frr version 3.2+cl3u3

frr defaults datacenter

hostname sw1

username cumulus nopassword

!

service integrated-vtysh-config

!

log syslog informational

!

router bgp 65001

bgp router-id 192.0.2.1

no bgp default ipv4-unicast

coalesce-time 1000

neighbor 192.0.2.6 remote-as 65006

neighbor 192.0.2.6 description downlink to fw1

neighbor 192.0.2.252 remote-as 39039

neighbor 192.0.2.252 description uplink to backbone

neighbor 2001:db8::6 remote-as 65006

neighbor 2001:db8::6 description downlink to fw1

neighbor 2001:db8:a:: remote-as 39039

neighbor 2001:db8:a:: description uplink to backbone

!

address-family ipv4 unicast

neighbor 192.0.2.6 activate

neighbor 192.0.2.6 route-map next-hop-vrr out

neighbor 192.0.2.252 activate

exit-address-family

!

address-family ipv6 unicast

neighbor 2001:db8::6 activate

neighbor 2001:db8::6 route-map next-hop-vrr out

neighbor 2001:db8:a:: activate

exit-address-family

!

route-map next-hop-vrr permit 10

set ip next-hop 192.0.2.3

set ipv6 next-hop global 2001:db8::3

!

line vty

!

sw2

sw2: /etc/network/interfaces

# Unrelated interfaces removed

auto swp1

iface swp1

address 192.0.2.255/31

address 2001:db8:b::1/127

alias uplink to backbone

auto swp42

iface swp42

alias downlink to fw1 port eth1

auto swp47

iface swp47

alias peerlink LAG member 1

auto swp48

iface swp48

alias peerlink LAG member 2

auto bridge

iface bridge

bridge-ports mlag-fw1 peerlink

bridge-vids 42

bridge-vlan-aware yes

auto mlag-fw1

iface mlag-fw1

bond-slaves swp42

bridge-pvid 42

clag-id 42

mstpctl-bpduguard yes

mstpctl-portadminedge yes

auto peerlink

iface peerlink

bond-slaves swp47 swp48

auto peerlink.4094

iface peerlink.4094

address 169.254.1.2/30

alias MLAG state synchronisation interface

clagd-peer-ip 169.254.1.1

clagd-priority 32768

clagd-sys-mac 44:38:39:ff:10:ff

auto vlan42

iface vlan42

address 192.0.2.2/29

address 2001:db8::2/64

address-virtual 00:00:5E:00:01:2A 192.0.2.3/29 2001:db8::3/64

alias Layer 3 link network between sw1/sw2/fw1

vlan-id 42

vlan-raw-device bridge

sw2: /etc/frr/frr.conf

frr version 3.2+cl3u3

frr defaults datacenter

hostname sw2

username cumulus nopassword

!

service integrated-vtysh-config

!

log syslog informational

!

router bgp 65002

bgp router-id 192.0.2.2

no bgp default ipv4-unicast

coalesce-time 1000

neighbor 192.0.2.6 remote-as 65006

neighbor 192.0.2.6 description downlink to fw1

neighbor 192.0.2.254 remote-as 39039

neighbor 192.0.2.254 description uplink to backbone

neighbor 2001:db8::6 remote-as 65006

neighbor 2001:db8::6 description downlink to fw1

neighbor 2001:db8:b:: remote-as 39039

neighbor 2001:db8:b:: description uplink to backbone

!

address-family ipv4 unicast

neighbor 192.0.2.6 activate

neighbor 192.0.2.6 route-map next-hop-vrr out

neighbor 192.0.2.254 activate

exit-address-family

!

address-family ipv6 unicast

neighbor 2001:db8::6 activate

neighbor 2001:db8::6 route-map next-hop-vrr out

neighbor 2001:db8:b:: activate

exit-address-family

!

route-map next-hop-vrr permit 10

set ip next-hop 192.0.2.3

set ipv6 next-hop global 2001:db8::3

!

line vty

!

fw1

fw1: /etc/network/interfaces

# unrelated interfaces removed

auto eth0

iface eth0 inet manual

bond-master bond0

auto eth1

iface eth1 inet manual

bond-master bond0

auto bond0

iface bond0 inet static

address 192.0.2.6

netmask 255.255.255.248

bond-miimon 100

bond-mode 802.3ad

bond-xmit-hash-policy encap3+4

bond-slaves none # Ubuntu sure is weird

iface bond0 inet6 static

address 2001:db8::6

netmask 64

fw1: /etc/bird/bird.conf

router id 192.0.2.6;

protocol kernel {

scan time 60;

import none;

export all;

}

protocol device {

scan time 60;

}

protocol bgp sw1 {

local as 65006;

neighbor 192.0.2.1 as 65001;

}

protocol bgp sw2 {

local as 65006;

neighbor 192.0.2.2 as 65002;

}

fw1: /etc/bird/bird6.conf

router id 192.0.2.6;

protocol kernel {

scan time 60;

import none;

export all;

}

protocol device {

scan time 60;

}

protocol bgp sw1 {

local as 65006;

neighbor 2001:db8::1 as 65001;

}

protocol bgp sw2 {

local as 65006;

neighbor 2001:db8::2 as 65002;

}
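When adapting these files to your own addressing, it is easy to put an address in the wrong subnet. As a small sanity check, the sketch below uses Python's standard `ipaddress` module to verify the plan used in the files above: all link VLAN addresses (including the VRR address) must sit in the same /29 and /64, and each uplink address must share a /31 or /127 with its BGP neighbour. The dictionary layout and function names are my own.

```python
import ipaddress

LINK_V4 = ipaddress.ip_network("192.0.2.0/29")
LINK_V6 = ipaddress.ip_network("2001:db8::/64")

# Addresses in the link VLAN (VLAN 42), taken from the configuration files above.
LINK_HOSTS = {
    "sw1 SVI": ("192.0.2.1", "2001:db8::1"),
    "sw2 SVI": ("192.0.2.2", "2001:db8::2"),
    "VRR SVI": ("192.0.2.3", "2001:db8::3"),
    "fw1 bond0": ("192.0.2.6", "2001:db8::6"),
}

# (local interface address with prefix length, BGP neighbour address) per uplink.
UPLINKS = [
    ("192.0.2.253/31", "192.0.2.252"),
    ("2001:db8:a::1/127", "2001:db8:a::"),
    ("192.0.2.255/31", "192.0.2.254"),
    ("2001:db8:b::1/127", "2001:db8:b::"),
]


def check_link_vlan():
    """Return a list of problems: addresses outside the link subnets, or duplicates."""
    problems, seen = [], set()
    for name, addrs in LINK_HOSTS.items():
        for addr, net in zip(addrs, (LINK_V4, LINK_V6)):
            ip = ipaddress.ip_address(addr)
            if ip not in net:
                problems.append(f"{name}: {addr} is outside {net}")
            if ip in seen:
                problems.append(f"{name}: {addr} is used twice")
            seen.add(ip)
    return problems


def check_uplinks():
    """Return a list of uplinks whose neighbour is not on the local point-to-point subnet."""
    problems = []
    for local, neighbour in UPLINKS:
        net = ipaddress.ip_interface(local).network
        if ipaddress.ip_address(neighbour) not in net:
            problems.append(f"{neighbour} is not in {net}")
    return problems


print(check_link_vlan() + check_uplinks() or "addressing plan is consistent")
```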

Update

- 2024-01-29: Updated dead links.

- 2025-09-03: Format